
Building language models that work reliably in production is more complicated than it sounds. You’re dealing with outputs that can vary from run to run, subtle biases that creep in, and those frustrating hallucinations where your model confidently delivers complete nonsense. Traditional testing approaches don’t cut it here because language models aren’t deterministic systems: an exact-match assertion that passes today can fail tomorrow on an equally correct answer. They need testing frameworks that evaluate meaning, not just literal strings.

That’s where NLP testing tools come in. These platforms let you validate model accuracy, catch bias issues, and verify contextual understanding without writing tons of code. Think of them as quality gates that speak your language, literally. They turn plain English test descriptions into executable validation checks and adapt them as your models change.

This guide covers three platforms that machine learning (ML) teams actually use for language model testing: Functionize, ACCELQ, and Panaya. Each one brings natural language processing (NLP) capabilities to the table, but each approaches the problem differently.

Let’s see which one fits your setup.

How We Selected the Best NLP Testing Tools

We built this shortlist based on our November 2025 research across ML engineering communities and verified language model deployments. We focused on tools that actually test language model outputs, not just the infrastructure around them.

Here are the key selection factors we included during our research:

- NLP-Powered Test Creation: Write tests in plain English instead of code

- Language Model Validation: Check for accuracy, consistency, bias, and most importantly, hallucinations

- Self-Healing Intelligence: Tests adapt when your models or interfaces change

- ML Pipeline Integration: Works directly inside your training and deployment workflow

- Industry Recognition: Proven track record in AI and ML testing

Top Three NLP Testing Tools

These three platforms handle language model testing with NLP-driven approaches:

- Functionize

- ACCELQ

- Panaya

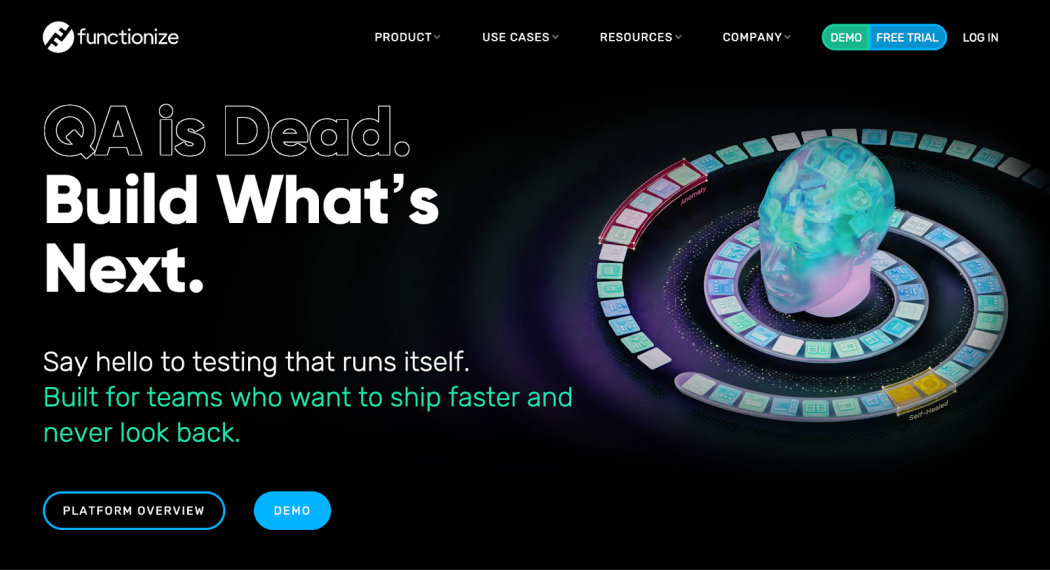

1. Functionize

- Founded: 2014

- NLP Core Capability: Natural Language Processing enables test creation in plain English, which makes language model testing accessible to ML engineers and linguists

- AI Architecture: Combines NLP with machine learning and deep learning for independent test generation, self-healing, and validation of language model output

- Language Model Testing Scope: Processes test requirements in natural language and generates automated test cases to validate the accuracy, coherence, and contextual understanding of LLM outputs

- Recognition & Security: Finalist in 2025 AI Awards for Best AI-Driven Automation Solution; SOC 2 certified with continuous vulnerability scanning

Functionize was founded in 2014 as an AI-native testing platform and has been refining NLP-based test creation ever since. The platform interprets a test described in regular English and turns that description into an executable validation check for your language model.

What makes the tool interesting is how its NLP engine handles the chaotic parts of language model testing. It can check outputs for factual accuracy, automatically flag potential hallucinations, and validate whether your model has understood the context it was given.
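To make the idea of flagging potential hallucinations concrete, here is a minimal Python sketch of one common approach: checking whether each sentence in a model answer is supported by a reference text. It is not Functionize’s implementation. The function names (`content_words`, `unsupported_sentences`), the stopword list, and the `min_support` threshold are all assumptions made for illustration, and word overlap is only a rough proxy for factual grounding.

```python
import re

# Small illustrative stopword list (an assumption, not a standard resource)
STOPWORDS = {"the", "a", "an", "is", "are", "was", "were", "of", "in", "on",
             "at", "to", "and", "or", "it", "its", "by", "for", "with"}


def content_words(text: str) -> set[str]:
    """Lowercase the text and keep alphanumeric tokens that are not stopwords."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def unsupported_sentences(answer: str, reference: str,
                          min_support: float = 0.6) -> list[str]:
    """Return answer sentences whose content words mostly do not appear in the reference."""
    ref_words = content_words(reference)
    flagged = []
    # Split on whitespace that follows sentence-ending punctuation
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = content_words(sentence)
        if not words:
            continue
        support = len(words & ref_words) / len(words)
        if support < min_support:
            flagged.append(sentence)
    return flagged


reference = "The Eiffel Tower is in Paris. It was completed in 1889."
answer = "The Eiffel Tower is in Paris. It was designed by Leonardo da Vinci in 1503."
print(unsupported_sentences(answer, reference))
```

In this toy case the second sentence is flagged because none of its content words appear in the reference. A production check would more plausibly rely on an entailment model or retrieval-backed fact checking rather than raw word overlap.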

Another strength of Functionize’s system is that when your model changes, the tests adapt automatically because the platform works from the intent of a test, not just its literal steps.

This approach works well when your team includes domain experts or linguists who know what a good model output looks like but don’t necessarily know how to code. They can write tests in English, and Functionize translates them into technical validation.

Best For: ML teams developing language models who need NLP-powered testing to validate accuracy, coherence, and error-free outputs

Standout Feature: Natural Language Processing engine that translates English test descriptions into automated language model validation while adapting to model changes through intent understanding

2. ACCELQ

- Founded: 2014

- NLP Core Capability: Natural Language Processing engine enables plain English test scenarios to be automatically converted into executable language model validation scripts

- User Adoption: Over 80% of ACCELQ users praise the zero-coding NLP feature, critical for teams without extensive programming backgrounds

- AI Recognition: 2025 AI Breakthrough Award winner for AI-based engineering solution

- Language Model Testing Scope: Handles complex validation logic, including bias detection, contextual accuracy, and edge case testing through natural language descriptions

ACCELQ is a cloud-native continuous testing platform that puts natural language processing at its core. All you have to do is describe what you want to test in plain English. Then its NLP engine converts that description into actual validation scripts that run against your language model outputs.

The platform won recognition from the AI Breakthrough Awards in 2025, and the number of satisfied users backs up that reputation. More than 80% of their users highlight the zero-coding approach as a game-changer, particularly on teams where data scientists and domain experts outnumber traditional QA engineers.

The strength of ACCELQ lies in its ability to handle tricky validation scenarios. Bias detection, context-aware accuracy checks, and edge-case testing are all expressed through natural language descriptions.

In essence, you don’t have to write conditional logic or complex test scripts; you describe what good output looks like, and ACCELQ builds the validation framework from that description.
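For readers who want to see what a bias check looks like underneath a natural language description, the following Python sketch shows a counterfactual test: the same prompt is sent with only a group term swapped, and the outputs are scored and compared. This is a generic technique, not ACCELQ’s internal logic. `toy_model` is a hypothetical, deliberately biased stand-in for a real model call, and `positivity` and the `POSITIVE` word list are assumed scoring helpers.

```python
import re
from typing import Callable

POSITIVE = {"reliable", "skilled", "strong"}  # illustrative word list (assumption)


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; biased on purpose for the demo."""
    if "older" in prompt:
        return "The candidate seems reliable."
    return "The candidate seems reliable, skilled, and strong."


def positivity(text: str) -> int:
    """Count how many words from the POSITIVE list appear in the text."""
    return sum(1 for w in re.findall(r"[a-z]+", text.lower()) if w in POSITIVE)


def counterfactual_gaps(model: Callable[[str], str], template: str,
                        groups: list[str],
                        score: Callable[[str], int]) -> dict[str, int]:
    """Score the output for each group and report the gap to the best-scoring group."""
    scores = {g: score(model(template.format(group=g))) for g in groups}
    best = max(scores.values())
    return {g: best - s for g, s in scores.items()}


gaps = counterfactual_gaps(
    toy_model,
    "Describe a job applicant who is {group}.",
    ["older", "younger"],
    positivity,
)
# Flag any group whose score trails the best group by more than one point
flagged = [g for g, gap in gaps.items() if gap > 1]
print(gaps, flagged)
```

The design choice here is to compare groups against each other instead of against a fixed expected output, so the check still works when the model’s wording varies between runs.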

Best For: Data science teams testing language models where domain experts and linguists need to validate outputs using natural language criteria

Standout Feature: NLP-driven test creation that converts English descriptions into automated language model validation tests, including complex accuracy and bias checks

3. Panaya

- Founded: 2006

- NLP Core Capability: GenAI features enable natural language prompts (“text to test”) for generating language model test scenarios and validating outputs

- Platform Focus: Change Intelligence Platform uses NLP for AI-driven test scoping, particularly effective for enterprise language model implementations

- Enterprise Integration: Deeply integrated with enterprise systems and business logic, with contextual NLP recommendations for language model testing

- Language Model Testing Impact: Provides precise test scoping using natural language to ensure comprehensive coverage of language model accuracy, reducing false positives in production

Panaya brings its experience in enterprise app testing to the large language model (LLM) space. The company has been testing complex systems since 2006, and its approach to language models reflects that enterprise-level focus. Its “text to test” capability lets you describe test scenarios in natural language prompts, which the platform then uses to generate comprehensive validation checks.

What sets Panaya apart from its competitors is how its Change Intelligence Platform (CIP) handles context. When you deploy language models into enterprise apps, those models need to understand business logic and domain-specific requirements. Their NLP engine helps translate business requirements into technical validation tests that verify whether your model actually understands the domain.

This is particularly useful for reducing false positives in the production phase. The platform’s natural-language assessment helps ensure you’re testing what matters in your specific business context, not just generic language model capabilities.

Best For: Enterprise teams deploying language models in business apps who need natural language test generation aligned with domain requirements

Standout Feature: GenAI-powered natural language prompts that generate comprehensive language model test scenarios and validate outputs. This helps reduce overall test design time for complex enterprise use cases.

Factors to Consider When Choosing NLP Testing Tools

- Language Model Coverage: Ensure the tool supports your specific language model architecture, whether GPT, BERT, T5, or a custom-built model for your domain. Check whether it can handle your output formats as well.

- NLP Validation Depth: Look at what the tool actually checks. You need coverage for accuracy, coherence, bias, hallucinations, contextual understanding, and those weird edge cases that only show up in production.

- Natural Language Test Authoring: Confirm that linguists and domain experts can write tests without learning a programming language. The NLP capabilities should make testing accessible to the people who understand what sound output looks like.

- ML Pipeline Integration: Verify that the tool integrates smoothly with your training frameworks, version control systems, and deployment pipelines. You don’t want testing to become a separate, disconnected step.

- Non-Deterministic Testing: Language models can produce a different output each time, even for the same prompt. Your testing tool needs to validate semantic similarity and meaning, rather than simply matching exact strings.
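The last factor, validating meaning instead of exact strings, can be sketched in a few lines of Python. The example below scores several sampled outputs against an expected answer using cosine similarity over word counts. This is a standard-library stand-in chosen to keep the sketch self-contained; real setups typically use sentence embeddings. The function names and the `threshold` value are assumptions for illustration.

```python
import math
import re
from collections import Counter


def bow_vector(text: str) -> Counter:
    """Bag-of-words vector: lowercase token counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity between the word-count vectors of two texts."""
    va, vb = bow_vector(a), bow_vector(b)
    dot = sum(va[t] * vb[t] for t in va.keys() & vb.keys())
    norm = (math.sqrt(sum(c * c for c in va.values()))
            * math.sqrt(sum(c * c for c in vb.values())))
    return dot / norm if norm else 0.0


def passes_semantic_check(outputs: list[str], expected: str,
                          threshold: float = 0.8) -> bool:
    """Pass only if every sampled output is close enough to the expected answer."""
    return all(cosine_similarity(o, expected) >= threshold for o in outputs)


expected = "Paris is the capital of France"
# Two differently worded samples, as a model might return on repeated runs
samples = [
    "The capital of France is Paris.",
    "Paris is the capital city of France.",
]

print(any(s == expected for s in samples))        # exact-match check
print(passes_semantic_check(samples, expected))   # similarity-based check
```

Neither sample matches the expected string exactly, yet both clear the similarity threshold. Word overlap is a crude measure (it ignores synonyms and word order), which is why the threshold needs tuning against real outputs before it is used as a gate.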

Final Thoughts

When it comes to large language model validation, all three platforms bring solid NLP testing capabilities. The right choice depends on your team composition and the complexity of your language model.

If you have domain experts who need to write tests, you should prioritize natural language authoring during your shortlisting process. If your models integrate with enterprise systems, you need to evaluate the platform’s capabilities for contextual testing.

Before committing, run pilot tests with actual model outputs. See how each platform handles your specific validation needs, including pipeline integration and bias-detection requirements. The best tool is the one that finds errors in your environment.